Oh My OpenAgent Review: A Multi-Agent Alternative to Claude Code (2026)

An honest one-month review of OpenCode + Oh My OpenAgent, covering ultrawork, agent roles, pricing, failure modes, and whether it is ready to replace Claude Code.

Quick take

Start with this judgment

27 min readBottom line

An honest one-month review of OpenCode + Oh My OpenAgent, covering ultrawork, agent roles, pricing, failure modes, and whether it is ready to replace Claude Code.

- Best for

- Readers comparing cost, capability, and real limits before choosing a tool

- What to check

- Oh My OpenAgent · OpenCode · Claude Code alternative

- Watch out

- Pricing and features can change, so confirm with the official source too.

3 key points

- For Korean developers who are burdened by Claude Code Max’s $200/month burden and Anthropic’s OAuth blocking on 2026-01-09, Oh My OpenAgent (OmO) is one of the most realistic alternatives with more than 12 types of agents on top of OpenCode.

- As a result of one month’s actual measurements, there are overwhelming cases such as clearing 8,000 ESLint warnings and 45,000 lines of Tauri app SaaS conversion, and balanced limitations such as $438 infinite loop billing incident and 3 times the token consumption in Glukhov comparison.

- You can try it now for side projects and multi-model experiments, but for Korean production backend and Claude Max heavy users, we recommend watching for one more quarter due to stability and account suspension risks.

목차

- Oh My What does OpenAgent ultimately do?

- Would it be okay if I switched to Claude Code instead?

- ultrawork Can one line really make 8,000 ESLint warnings go away?

- Sisyphus·Prometheus·Oracle… How do these agents divide labor?

- Hash-Anchored Edit makes stale-line errors 0%?

- After using it for a month, what was the limit?

- How should I look at licenses, telemetry, and costs?

- How do you evaluate the Korean community?

- Is it worth introducing it now or should I wait a little longer?

1. What does Oh My OpenAgent ultimately do?

If you are a developer who started Claude Code Pro at $20, then switched to Max $100~$200, and then suddenly received the message “This token cannot be used outside of Claude Code CLI.” on 2026-01-09, this article is for you. Oh My OpenAgent (hereinafter referred to as OmO, Oh My Open Agent in Korean) is a Korean multi-agent harness that reached 200,000 downloads in just one month (Source: [Nowadays IT Wishket] (https://yozm.wishket.com)).

It is an “Orchestration Layer” placed on top of OpenCode.

OmO is not an “AI model”. It does not perform any inference or code generation on its own. OmO is a plug-in built on top of OpenCode, an open source coding CLI, and provides more than 12 predefined agents (Sisyphus, Prometheus, Oracle, Atlas, Hephaestus, etc.), model routing, and workflow commands all at once. In other words, it is a harness that has been designed in advance, “Which model to put in which role, and what context to give to which command.” If you are not familiar with the harness concept itself, it will be easier to understand if you first read [AI apps are completed with harnesses, not prompts] (/en/ai/harness-engineering-ai-apps).

The GitHub repository is v3.17.x as of 2026-04-30 and has 55,200 stars and 4,500 forks (Source: oh-my-openagent GitHub). Cumulative downloads are approximately 1.6M+, which is an unusual scale for a Korean solo developer project.

Tools run by maintainer Kim Yeon-gyu

The maintainer is YeonGyu Kim, an AI agent engineer at Indent Corporation. In his Threads post, he recalled, “What was an experiment in the corner of a room became a plug-in and a component that created a large fan base in one product.” (Source: @yeon.gyu.kim, 33.1K views). README.ko.md contains a self-confession saying “Personal token value of approximately $24,000 (approximately 30 million won) was invested,” emphasizing “Verification of actual use, not a hobby project.”

Correction for package name change

Old Korean materials (especially PyTorchKR posts before 2026-01) are marked as oh-my-opencode. Around 2026-02, the maintainer prioritized the name oh-my-openagent as a plugin entry, and the package name oh-my-opencode is maintained for backward compatibility. The config file recognizes both. In the text, it is abbreviated as OmO.

Part 1 is a review of one month of actual use. Operational settings such as installation method, model key issuance, OpenCode setup, and telemetry disabling will be covered step by step in Part 2. Part 1 is seen as an article to decide “Can I switch to OmO?”

2. Would it be okay to switch to Claude Code instead?

This is the most frequently asked question by OmO users, and the answer is “conditional example”. The game rules have changed due to the 2026-01-09 incident, but that doesn’t mean all users have to move immediately.

2026-01-09 Anthropic OAuth blocking incident

2026-01-09 Anthropic blocked subscription OAuth tokens on the server side outside of the official Claude Code CLI. OpenCode·Roo Code·Cline users lost their work screens at the same time that day (Source: nxcode opencode-alternative-2026). Yeon-gyu Kim wrote “Anthropic blocked OpenCode because of us… They want you locked in” as marketing copy in README. This statement is the maintainer’s position, and Anthropic’s official announcement of the reason has not been separately confirmed.

There are two points. First, the usage pattern of subscriptions from Claude Code external clients was effectively blocked. Second, the bypass itself may violate ToS, so Korean users bear the risk of account suspension. Apiyi’s Korean guide also warns that “Official Claude Code Max risks account suspension for domestic users” (Source: Apiyi). To check the price and features of Claude Code itself, refer to [Completely Conquering Claude Code: Installation, Price, and Usage (2026)] (/en/ai/claude-code-review).

Cost Structure Comparison

OmO’s recommended bundle is a combination of ChatGPT $20 + Kimi Code $19 + GLM Coding $10 (or OpenCode Go $10). There is an explicit cost-savings motivation compared to the Claude Max $200. In the scenario calculated by Apiyi assuming a workload of 10 billion tokens for 8 months, an example of reducing API $15,000 to Max $800 by 93% is cited, but this is assuming heavy users before Anthropic was blocked.

| item | Claude Code Max | OmO Recommended Bundle | OmO free fallback |

|---|---|---|---|

| monthly fixed cost | $100~$200 | $49~$59 | $0 |

| flagship model | Claude Opus 4.7 | GPT-5.4 + Kimi K2.6 + GLM 5 | Kimi K2.5-free, MiniMax M2.7-free, big-pkl(GLM 4.6 free) |

| Korean User ToS Risk | Concerns about account suspension after Anthropic blocking | Distributed by vendor | Official Free Tier |

| multi agent | Basic Sub-agent Step 1 | Sisyphus·Prometheus·Oracle 12+ | Same (only model falls back) |

| Code block 1-line command | claude /agents | ulw / ultrawork | ulw |

However, before switching based solely on cost, you should read H2-3 and H2-6 first. There is an objective measurement that shows that OmO actually uses up to 3 times more tokens for simple tasks.

”A person who can change” vs “person who has to wait”

Who is best to switch to first: Side projects, multi-model experimental researchers, and people who already use OpenCode on a daily basis. Conversely, those who have to wait: Korean production backend/financial domains, heavy users who are already using Claude Max well, and users who are cost-sensitive and mostly do short tasks. The entire decision matrix is summarized in H2-9.

3. Ultrawork Can 8,000 ESLint warnings really disappear with just one line?

ultrawork is OmO’s signature command. ulw <goal> is famous for its copy of automating planning, distribution, execution, and verification in one line, but looking at the actual data, the two sides are clear.

3 overwhelming success stories

There are three user reviews posted on OmO’s official site (Source: ohmyopenagent.com).

- Jacob Ferrari: “Solved 8,000 ESLint warnings in one day with Oh My Opencode.”

- James Hargis: “Converted a 45,000-line Tauri app into a SaaS web app overnight.”

- Quant Researcher B: “7 days of work on Claude Code was 1 hour of Sisyphus.”

What the three cases have in common is “There are many branches and repetitions, but the transformation pattern is consistent.” It’s work. OmO’s parallel division structure stands out in tasks where LLM can be homomorphically applied to thousands of places once a pattern is set, such as batch organizing ESLint rules, migrating identical component patterns, and batch refactoring of data structures.

Rebuttal from 4th Path review: “Starter costs are expensive”

If you use the same command for a short task, the results will be different. As the 4th Path review points out (Source: 4th Path):

“ultrawork activation alone consumes 15,000–25,000 tokens on context setup.”

“Using ultrawork for everything is as inefficient as using a sledgehammer to hang a picture frame.”

In other words, since the command call itself consumes 150,000 to 25,000 tokens, it is rather detrimental to short tasks such as “Fix one function.” ulw is a heavy command, and it is more efficient to use the general OpenCode subagent flow for light commands.

Glukhov comparative measure: OmO loses in single document migration

Glukhov’s comparative review ran one single document migration simultaneously between OmO and native OpenCode (Source: Glukhov OmO Experience).

| Measuring Items | Genuine OpenCode | Oh My OpenAgent | difference |

|---|---|---|---|

| pass rate | 73.1% | 69.2% | OmO 3.9 pp low |

| Number of LLM requests | Episode 27 | Episode 96 | About 3.5 times |

| Total token consumption | reference value | about 3 times | 3x |

| Perceived task completion time | faster | slower | Limited to a single simple task |

Glukhov himself stated in his conclusion, “That doesn’t mean OmO is bad for all tasks, but it’s overkill for simple single tasks.” This is information that is often missing from the Korean community.

ulw writes “Only when the task is clearly large or repetitive”. For modifying a single function, fixing a single line bug, or correcting a single style, it is advantageous in both tokens and time to use the general OpenCode workflow (opencode run, single agent call).

4. Sisyphus·Prometheus·Oracle… How do these agents divide labor?

OmO’s core value is the pre-design of “Which model is used for which task?” More than 12 types of agents are grouped into 4 groups, and each group has a different recommended model.

4-group classification and recommended model

| group | representative agent | 1st ranked model | Korean users fallback |

|---|---|---|---|

| Communicator (conversation/planning) | Sisyphus, Metis | Claude Opus series | Kimi K2.5 → GLM-5 |

| Dual-Prompt | Prometheus, Atlas | Claude Opus series | GPT-5.4 high → Gemini 3.1 Pro |

| GPT-native Deep | Hephaestus, Oracle, Momus | GPT-5.4 | Local replacement not possible, paid service recommended |

| Utility | Librarian, Explore, Sisyphus-Junior | Haiku/Sonnet/Mini | Open model fallback possible |

(Source: agent-model-matching.md)

Why Sisyphus Turns Off Tasks

Sisyphus is OmO’s main controller. When the user enters ulw "Clean up all ESLint warnings from this codebase", Sisyphus first scans the code base, splits the work, receives the branching scenario from Prometheus, and delegates the precise transformation to Hephaestus. This division of labor is what is most different from the Claude Code single agent flow. One velog user commented, “Attractive is that each agent runs tasks in parallel and in the background.” (Source: @takealittletime velog).

Hephaestus and Atlas: lines of precision analysis

Hephaestus is more of a code conversion expert, and Atlas is more of a branching strategist. Both recommend GPT-5.4 high or Claude Opus series, but it is difficult for Korean users to use Opus directly after OAuth is blocked. The combination of Kimi K2.6’s cost-effectiveness is realistic, and this part was discussed in detail in [Kimi K2.6 Complete Analysis — How to Replace Claude Opus 4.7 at 88% Cheaper] (/en/ai/kimi-k2-6-deep-dive).

Utility group: Free fallback possible

Auxiliary agents such as Librarian, Explore, and Sisyphus-Junior run at Haiku/Sonnet/Mini level or in free open models. This is why OmO specifically specified a free fallback bundle (Kimi K2.5-free, MiniMax M2.7-free, big-pkl GLM 4.6 free). However, the mix is stable, with only the Utility group being free and Communicator and Dual-Prompt being paid for.

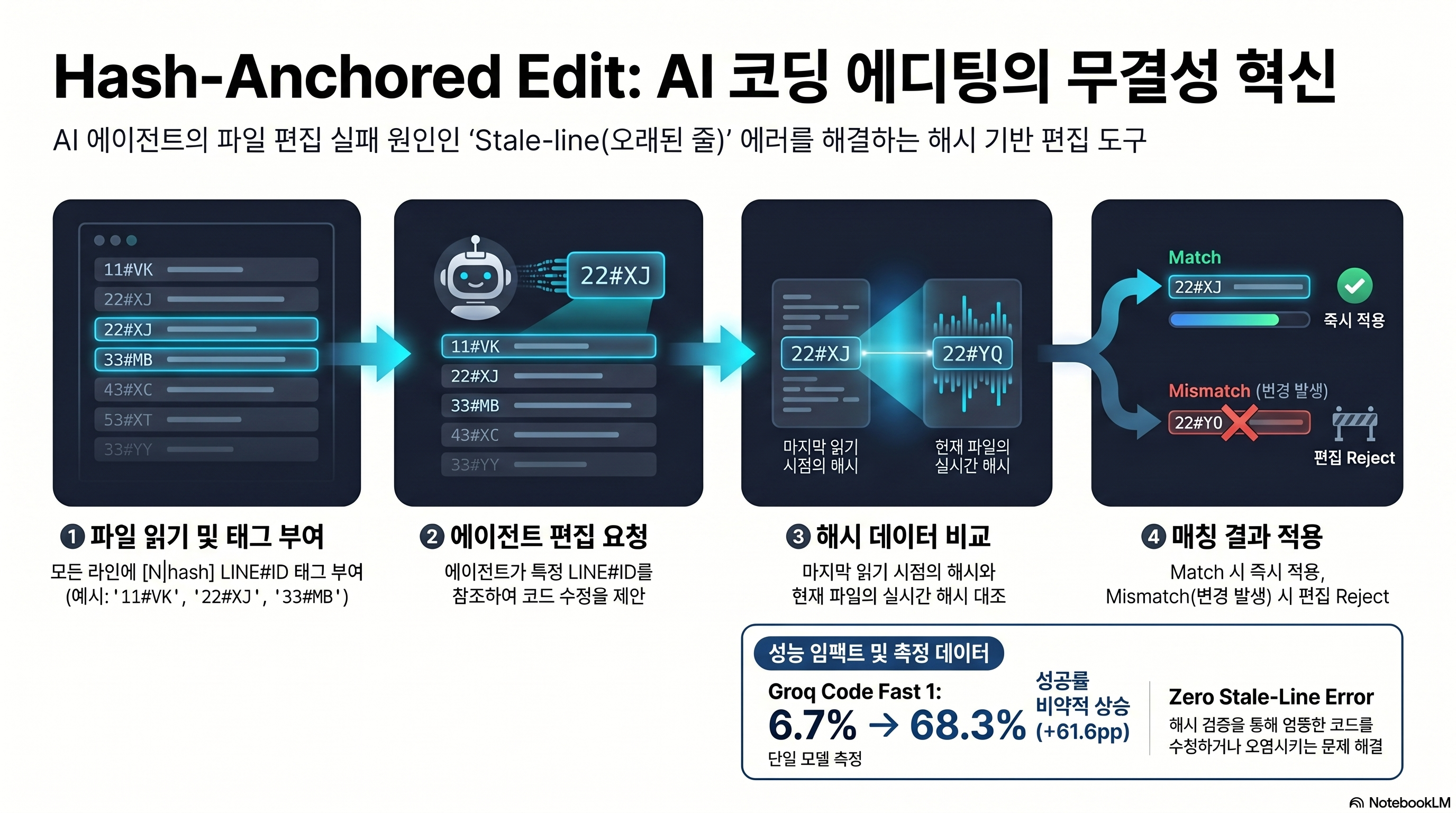

5. Hash-Anchored Edit reduces stale-line errors to 0%?

The function with the strongest marketing copy in OmO is Hash-Anchored Edit. This is “Prevents accidents where patches are installed in the wrong location even if the file changes while the agent is not looking.” It’s a mechanism.

How it works: LINE#ID tag

When OmO delivers a file to the agent, it attaches a LINE#ID tag in the form of [N|hash] to every line. It has the following form:

11#VK: function hello() {

22#XJ: return "world";

33#MB: }When the agent requests editing, OmO compares the hash of the current file with the hash of the last read. If a mismatch is found, the edit is rejected and the agent is forced to say “The file has changed, read it again”. The pattern itself, the maintainer said, was inspired by Can Bölük’s article “The Harness Problem.” The general theory of harness design has been covered in [AI apps are completed with harnesses, not prompts] (/en/ai/harness-engineering-ai-apps).

Official Bench: Only Grok Code Fast 1 was measured

OmO’s official bench was measured using the Grok Code Fast 1 model, and the success rate, which was 6.7% before Hash-Anchored Edit was activated, rose to 68.3% after activation. It is an increase of 61.6 points. These are impressive numbers, but there is one limitation. DeepWiki states in the original text as follows:

“The documentation does not provide success rate data for other models (GPT, Claude, Gemini).”

(Source: DeepWiki oh-my-openagent)

In other words, there is no official measurement of the success rate in GPT-5.4·Claude Opus·Gemini 3.1 Pro. The expression “stale-line 0%” clearly points out that it is self-measurement and marketing copy and there is no external verification yet.

Why it’s still worth it

Even considering this limitation, Hash-Anchored Edit has great significance in a multi-agent environment. In situations where multiple agents are touching the same file at the same time, stale-line errors are common. If one agent edits line 50 while another agent deletes line 30, the first agent’s patch may end up in the wrong location. Hash-Anchored Edit rejects this case as a mismatch. Although there are no quantitative figures other than the Grok bench, there is sufficient qualitative evidence to say “The more simultaneous edits, the greater the effect.”

6. After using it for a month, what was the limit?

This is the main point of the practical review. I summarized the limitations I encountered while running OmO for a month into 6 points.

Limit 1: $438 infinite loop billing incident (issue #2571)

The biggest incident is issue #2571 reported on 2026-03-14 (Source: GitHub issue #2571).

User report summary: Gemini was stuck in an infinite loop for 3 hours and 32 minutes, turning 809 times in a row. All turns ended with tool_calls, and the user comment “The loop only stopped because the parent session was manually terminated” remains. The UI display cost was $168.65, but the actual bill differed by about $326, and the combined user loss amounted to $438. There were three accumulated defects: absence of max-step, silent model routing (no notification of automatic conversion from GPT-5.4 → Gemini 3.1 Pro), and cost display bug.

Although it has been closed with PR #2590/#2575, it is a textbook idea showing how to design a max-step guard in multi-agent automation. If you want to use OmO for operation, it is recommended to add an additional layer of alert/limit guard externally.

Limitation 2: OpenCode 1.4.0 compatibility (issue #3220)

Issue #3220 reported on 2026-04-08 was a symptom of all OmO agents disappearing when upgrading to OpenCode 1.4.0. Although it is closed as Closed, it still shows the dependency structure that the minor version of OpenCode’s main branch leads to OmO. To use OmO, OpenCode version pinning is virtually essential.

Limitation 3: TUI Freeze (issue #3143, Open unresolved)

Issue #3143 reported on 2026-04-05 is a v3.15.2 TUI freeze and blank screen issue and is still open as of 2026-04-30. The only workaround is the v3.14.0 downgrade. In terms of stability, this is the most direct basis for delaying the introduction of Korean production.

Limitation 4: Glukhov measure — 3x tokens, lower pass rate

Let’s revisit the Glukhov comparison discussed in H2-3. In single document migration, OmO recorded 3.5 times lower requests, 3 times lower tokens, and 3.9 pp lower pass rate than pure OpenCode. This is a clear rebuttal to the assumption that “OmO is good for any task”.

Limitation 5: Memory pressure on OpenCode TUI itself

It’s not just OmO’s problem, but OpenCode TUI often occupies 1GB+ of RAM. According to Hacker News user reports, OOM occurs frequently on $7/year VPS. There are few problems on developer PCs, but they are heavy in self-hosting/CI environments.

Limitation 6: Absence of status signal between agents

Issue #1980 points out the absence of operational status signals between Hephaestus and Atlas. When two agents touch the same file at the same time, they often crash because they are not aware of each other’s progress. Hash-Anchored Edit prevents some, but workflow unit locks and state brokers are still empty.

Pros

- + Tremendous time savings in large-scale repetitive tasks with ulw compared to OpenCode single agent (8,000 ESLint, 45k line Tauri migration)

- + The 12+ agent 4-group division of labor structure and model routing are pre-designed, so you don’t have to make your own harness.

- + Reduce the risk of multi-agent stale-line accidents with Hash-Anchored Edit

- + Good community accessibility with Korean maintainer and Korean README/Threads

- + A realistic alternative for external client users who bypassed Claude Code Max by blocking Anthropic OAuth

Cons

- − $438 Infinite loop billing accident case — cumulative result of absence of max-step guard + silent model routing

- − In a single simple task, 3 times more tokens and 3.9pp lower pass rate (Glukhov measurement)

- − ulw activation itself consumes 150,000 to 250,000 tokens — sledgehammer for short tasks

- − Agent disappears and TUI freeze issue repeats with each OpenCode 1.4.0/1.5.x minor upgrade

- − Hash-Anchored Bench officially measures only one model, Grok Code Fast 1. No GPT/Claude/Gemini data

- − License SUL-1.0 — Commercial redistribution restricted. The notation ‘MIT’ in some Korean materials is an error.

7. How should I look at licenses, telemetry, and costs?

If you want to use OmO in your company, you must check the license and telemetry. There is a lot of incorrect information in the Korean secondary data, so we will correct it.

License correction: It’s SUL-1.0, not MIT

PyTorchKR · Some Korean blogs refer to OmO as “MIT License”, but this is old information from the oh-my-opencode era. As of 2026-04-30, the GitHub license is SUL-1.0 (Sustainable Use License) (Source: LICENSE.md).

The key provisions of SUL-1.0 are as follows.

- Scope of use: Internal business/non-commercial/personal use only

- Redistribution: Only available in non-commercial/free form.

- License Notice: No modification or deletion permitted.

- Automatic termination for violation: Automatic termination of license after 30-day correction period.

The form in which a company can “Integrate OmO into SaaS products and sell them for a fee” is clearly limited. Using it as an in-house development tool is generally within the permitted range, but legal review is essential.

Telemetry: Default ON, opt-out possible

OmO enables PostHog-based telemetry by default. It is anonymously aggregated based on the hashed installation identifier, and the collection targets are as follows (Source: privacy-policy.md).

- Collection:

run_started/completed/failed,install_*,plugin_loaded,omo_daily_active, package version/runtime meta - Does not collect: prompt body, source files, repo content, access token, API key, raw hostname

Opting out can be done with a single environment variable.

export OMO_SEND_ANONYMOUS_TELEMETRY=0

# or disable PostHog itself

export OMO_DISABLE_POSTHOG=1If telemetry itself needs to be blocked due to company policy, add the above line to .bashrc/.zshrc or place it in the CI environment variable. If you want to monitor token usage, it is helpful to also introduce the token measurement pattern covered in [How to reduce Claude Code tokens by 71 times — Graphify practical deployment 2026] (/en/ai/graphify-guide).

Cost: Actual operating cost of “bundle price”

The recommended bundle of $49 to $59 is just the total subscription fee. The actual token cost is separate. Jeong Jeong-il’s (jeongil.dev) critique hits this point directly.

“Burn $15-20 in 30 minutes when switching APIs. There are also cases where the amount was more than $3,650 per month.”

(Source: Jeongil Jeongil.dev)

In other words, the token cost is overwhelmingly greater than the subscription fee. To introduce it into operation, it is recommended that daily/weekly token limits and max-step guards be installed externally. issue #2571 The accident could have been prevented with just one max-step guard.

8. How do you evaluate the Korean community?

As OmO is a Korean maintainer project, the response from the Korean community is fast and diverse. There are both favorable reviews and criticisms, so I quote them in a balanced manner.

- "What was attractive was that each agent ran tasks in parallel in the background." — @takealittletime velog (2026-01-12)

- "An experiment that was conducted in the corner of the room became a plug-in, and a component in a product that created a large fan base." — Kim Yeon-gyu's own Threads (2026-01-09, 33.1K views)

- "200,000 downloads in one month, Korean developer captivating the world." — These days IT Wishket headlines

- "It keeps leaking into Samcheonpo during implementation. Test based on your own broad standards. Forgetting the context in a new session." — @ziczin7176 velog (2026-01-09)

- "Authentication issues with every update, opencode eventually bypasses no matter which LLM is used. It is difficult to use the free model in large corporate collaborations. There is a large difference in performance between updates." — @nomadjun195 Threads (2026-01-29)

- "For now, I recommend starting with Agent Teams. Since it is an official function, there is no risk of ban." — Jeong Jeong-il jeongil.dev (2026-02-09)

Favorite point: “Parallel execution and Korean-friendliness”

@takealittletime velog article is a representative example. The evaluation that “Attractive is that each agent runs tasks in parallel and in the background.” clearly points out OmO’s differentiation (Source: @takealittletime velog). Abundant Korean language resources such as Korean README, Korean Threads posts, and PyTorchKR Korean discussions (2026-01-05) are also beginner-friendly (Source: PyTorchKR).

Criticism Point 1: Context/Session Management

@ziczin7176 The velog article (2026-01-09) clearly points out the pain point. “It keeps leaking to Samcheonpo during implementation.”, “Test based on your own broad standards”, “Forgetting the context in a new session.” The weakness of context transfer between sessions in a multi-agent environment is a structural limitation of OmO.

Criticism point 2: Update deviation and collaboration environment

@nomadjun195 Threads article (2026-01-29) presented three criticisms (Source: @nomadjun195 Threads).

- Authentication issues are repeated with each update.

- OpenCode ultimately has a bypass nature no matter which LLM is used. In large corporate collaboration, using the free model is difficult in terms of ToS and security.

- There is a large difference in performance between updates.

Criticism Point 3: Jeong Jeong-il’s Balance Criticism

Jeong Jeong-il’s (jeongil.dev) article is the most structured among Korean language analyses. “Examples of earning more than $3,650 per month” and “Burn $15-20 in 30 minutes when switching APIs” along with cost warnings and the conclusion “For now, I recommend starting with Agent Teams. There is no ban risk as it is an official function.” are the most realistic guidelines for Korean production users (Source: jeongil.dev).

9. Is it worth introducing it now or should I wait a little longer?

The data up to this point is organized into a decision matrix for each persona.

Persona-specific decision matrix

| persona | recommended | reason |

|---|---|---|

| Korean production backend operator | atmosphere | TUI frieze (#3143 Open) + absence of max-step guard + SUL-1.0 commercial limit |

| Side project/individual developer | trial | Overwhelming effect in large-scale repetitive tasks such as ESLint 8,000 lines and Tauri 45k lines |

| Multi-model experiment researcher | trial | Sisyphus·Prometheus·Oracle routing is utilized as is. Best for model comparison experiments |

| Claude Max heavy user (Korea) | danger | Anthropic OAuth blocking + risk of account suspension when bypassed (Apiyi) |

| Cost sensitive + short task oriented | It's not good | ulw 15,000 - 25,000 token overhead + 3x tokens in a single operation (Glukhov) |

| Beginner (no Claude Code experience) | conditional | OpenCode + model key setup learning curve is steep. Recommended starting from Pro/Max |

I recommend it to people like this

- Developers with large-scale refactoring planned as a side project: The ulw case is tailored for exactly this task.

- Multi-model/harness learning type senior: The 12+ agent 4-group structure itself is a good learning material.

- Users who already use OpenCode: There is almost no barrier to entry, and it is worth choosing to use ulw after a month of trying.

Conversely, if the following applies, watch for one more quarter.

- Korean production backend/finance: SUL-1.0, TUI freeze, and absence of max-step guard are all operational risks.

- Heavy users whose work is stable with Claude Max: The motivation to reduce costs is weak, and only new ToS risks are borne.

- For users already familiar with GPT-5.5’s coding mode: The single model flow is simpler. Comparison data was covered in GPT-5.5 Summary: Performance·Benchmark·Price·Response (2026).

conclusion

Key takeaways

Oh My OpenAgent is not an “AI model” but a harness that bundles 12+ agents and ulw commands on top of OpenCode. In large repetitive tasks, overwhelming cases such as ESLint 8,000, 45k line Tauri migration are produced, but in single simple tasks, counterevidence exists such as 3 times the tokens and 3.9 pp low pass rate (Glukhov). Considering the $438 infinite loop billing incident and SUL-1.0 license restrictions, it is a good time to try it now for side projects and multi-model experiments, but for Korean production and Claude Max heavy users, it is time to wait another quarter.

- Side project developer: The ulw effect is clear in massive iterative refactoring.

- Multi-agent learning type senior: 4 groups of 12+ agent pre-designs are the learning materials.

- Existing OpenCode users: The additional learning cost is small, and it is worthwhile to use only ulw.

Step 1 — First, look at the GitHub issue tracker

Check whether issue #3143 (TUI freeze) has been changed to Closed and the max-step guard PR has been merged. If the stability signal is weak, the introduction is postponed.

Phase 2 — One week pilot run on side projects

Install OpenCode + OmO in a side repo separate from the main work, and try ulw only 1-2 times per week. The token limit is imposed externally.

Step 3 — Opt out of telemetry and review your license

If your company is considering introduction, share the OMO_SEND_ANONYMOUS_TELEMETRY=0 environment variable and SUL-1.0 license terms with the legal department.

Step 4 — Review the introduction of this work after publication of Part 2 (Installation Guide)

If Part 1 determines 'Go/No-Go', the actual installation, model key, and OpenCode setup follows Part 2. Part 2 will be published as a sequel to this article.

- oh-my-openagent GitHub repository

- SUL-1.0 License Text

- Privacy Policy (PostHog Telemetry Specification)

- GitHub issue #2571 — $438 infinite loop incident

- Glukhov — Oh My Opencode Experience Comparison Measurement

- DeepWiki oh-my-openagent

- @takealittletime velog review

- @nomadjun195 Threads criticism

- Jeongil Jeongil.dev Balanced Criticism

- PyTorchKR Korean Discussion

- nxcode opencode-alternative-2026 (Anthropic blocking report)